Predicting Wealth & Poverty with MOSAIKS¶

To predict the relative wealth of the people in a given area that was not surveyed, we will start with a geospatial foundation model called MOSAIKS. MOSAIKS stands for "MULTI-TASK OBSERVATION USING SATELLITE IMAGERY & KITCHEN SINKS" and uses random convolutional features to extract n features from a satellite image, each corresponding to a given random filter.

- Nature Paper: https://www.nature.com/articles/s41467-021-24638-z

- Website with API: https://api.mosaiks.org/portal/index/

- GitHub Repo: https://github.com/Global-Policy-Lab/mosaiks-paper

Environment Setup¶

# for fetching data

import os

import requests

from tqdm import tqdm

# for data processing

import zipfile

import glob

import pandas as pd

import numpy as np

import geopandas as gpd

# for visualization

import matplotlib.pyplot as plt

import seaborn as sns

from mpl_toolkits.basemap import Basemap

# for regression

from sklearn.model_selection import train_test_split

from sklearn.linear_model import Ridge

from sklearn.metrics import r2_score

# set the random seed for reproducibility

np.random.seed(42)

print("imported")

imported

# configure parameters

decimal_place = 0 # spatial resolution of the mosaiks embeddings in degrees of lat/long

mosaiks_data_url = f"https://api.mosaiks.org/portal/download_grid_file/coarsened_global_dense_grid_decimal_place={decimal_place}_GHS_pop_weight=True.zip"

mosaiks_data_path = f"data/mosaiks{decimal_place}/"

zip_file_path = f"{mosaiks_data_path}global_{decimal_place}.zip"

mosaiks_embeddings_file_path = f"{mosaiks_data_path}coarsened_global_dense_grid_decimal_place={decimal_place}_GHS_pop_weight=True.csv"

labels_filename = "data/dhs_final_labels.csv"

labels_centered_filename = "data/dhs_final_labels_centered.csv"

labels_merged_filename = "data/dhs_final_labels_centered_with_mosaiks.csv"

os.makedirs(mosaiks_data_path, exist_ok=True)

print("configured")

configured

Fetch Data from MOSAIKS Website¶

They have an API to extract embeddings for a given location, but it's down right now. Instead, we'll download a coarse resolution version with an embedding on a grid every 0.25 degress on Earth over land. The zipped file is about 5GB and takes a few minutes to download. We'll then unzip it so we can find the embeddings closest to our labeled data.

# Cookie for authentication, scraped from a web browser session, please do not share

# COOKIE = {

# "csrftoken": "I9x2jvGGE4se3MBa9moavDtC9o8YEgaA4Rup5ijhHJjCTRn0qRpHGJW06XG0SooG",

# "sessionid": "y44nlmh7rjrqvvxj902jc8pmw918m1p7",

# }

COOKIE = {

"ss_cvr": "eec8f713-5f28-472a-bbdc-66af1f3a229c|1765924358453|1765924358453|1765924358453|1",

"ss_cvt": "1765924358453"

}

# Function to download a file with progress bar

def download_file(url, filename):

response = requests.get(url, cookies=COOKIE, stream=True, verify=False)

# handle request

if response.status_code == 200:

total_size = int(response.headers.get("content-length", 0))

with open(filename, "wb") as f, tqdm(

desc=os.path.basename(filename),

total=total_size,

unit="B",

unit_scale=True,

unit_divisor=1024,

) as bar:

for chunk in response.iter_content(1024):

f.write(chunk)

bar.update(len(chunk))

print(f"✅ Downloaded: {filename}")

else:

print(f"❌ Failed to download {url} (Status {response.status_code})")

# Download each file

# Base URL for file downloads

print(f"📥 Downloading {mosaiks_data_url}...")

download_file(mosaiks_data_url, zip_file_path)

📥 Downloading https://api.mosaiks.org/portal/download_grid_file/coarsened_global_dense_grid_decimal_place=0_GHS_pop_weight=True.zip...

/Users/isaiah/miniconda3/envs/ml/lib/python3.14/site-packages/urllib3/connectionpool.py:1097: InsecureRequestWarning: Unverified HTTPS request is being made to host 'api.mosaiks.org'. Adding certificate verification is strongly advised. See: https://urllib3.readthedocs.io/en/latest/advanced-usage.html#tls-warnings warnings.warn( /Users/isaiah/miniconda3/envs/ml/lib/python3.14/site-packages/urllib3/connectionpool.py:1097: InsecureRequestWarning: Unverified HTTPS request is being made to host 'www.mosaiks.org'. Adding certificate verification is strongly advised. See: https://urllib3.readthedocs.io/en/latest/advanced-usage.html#tls-warnings warnings.warn( global_0.zip: 116kB [00:00, 3.60MB/s]

✅ Downloaded: data/mosaiks0/global_0.zip

# unzip all downloaded files

for zip_file in glob.glob(os.path.join(mosaiks_data_path, "*.zip")):

# Open the zip file

with zipfile.ZipFile(zip_file, "r") as z:

print(f"Extracting {zip_file} to {mosaiks_data_path}...")

# Extract all the contents into the extraction directory

z.extractall(mosaiks_data_path)

print('done unzipping!')

--------------------------------------------------------------------------- BadZipFile Traceback (most recent call last) Cell In[4], line 4 1 # unzip all downloaded files 2 for zip_file in glob.glob(os.path.join(mosaiks_data_path, "*.zip")): 3 # Open the zip file ----> 4 with zipfile.ZipFile(zip_file, "r") as z: 5 print(f"Extracting {zip_file} to {mosaiks_data_path}...") 6 # Extract all the contents into the extraction directory File ~/miniconda3/envs/ml/lib/python3.14/zipfile/__init__.py:1471, in ZipFile.__init__(self, file, mode, compression, allowZip64, compresslevel, strict_timestamps, metadata_encoding) 1469 try: 1470 if mode == 'r': -> 1471 self._RealGetContents() 1472 elif mode in ('w', 'x'): 1473 # set the modified flag so central directory gets written 1474 # even if no files are added to the archive 1475 self._didModify = True File ~/miniconda3/envs/ml/lib/python3.14/zipfile/__init__.py:1538, in ZipFile._RealGetContents(self) 1536 raise BadZipFile("File is not a zip file") 1537 if not endrec: -> 1538 raise BadZipFile("File is not a zip file") 1539 if self.debug > 1: 1540 print(endrec) BadZipFile: File is not a zip file

Prepare Wealth Data Labels to Merge with MOSAIKS Embeddings¶

Now we need to match up our locations of our wealth data with the nearest MOSAIKS locations on the global grid of embeddings. We'll do that with some decimal rounding tricks. Some labels will share the same nearest neighbor, so we'll just pick the first one that has a non-null label for asset_index.

# Load labels dataset

print("Reading in data labels...")

df = pd.read_csv(labels_filename)

# drop rows that have a NaN for asset_index

print("Dropping any rows that don't have a value for asset index...")

before_len = len(df)

df = df.dropna(subset=["asset_index"])

print(f"Started with {before_len} rows, now have {len(df)} rows")

# Assign coordinates to nearest tile centroid

print("Finding nearest MOSAIKS embedding locations...")

# for 1 degree

if decimal_place == 0:

df["lon2"] = round(round(df["lon"] + 0.5, 0) - 0.5, 1)

df["lat2"] = round(round(df["lat"] + 0.5, 0) - 0.5, 1)

# for 1/4 degree

if decimal_place == 0.25:

df["lon2"] = round((round(df["lon"]*4 + 0.5, 0) - 0.5)/4, 3)

df["lat2"] = round((round(df["lat"]*4 + 0.5, 0) - 0.5)/4, 3)

# keep just one row for every unique Lat, Lon combination

print("Dropping labels that share the same embedding location...")

before_len = len(df)

df = df.drop_duplicates(subset=["lat2", "lon2"], keep="first")

print(f"Started with {before_len} rows, now have {len(df)} rows")

# Convert to GeoDataFrame

print("Converting to a geodataframe and exporting to csv...")

gdf = gpd.GeoDataFrame(df, geometry=gpd.points_from_xy(df["lon2"], df["lat2"]), crs="EPSG:4326")

gdf.to_csv(labels_centered_filename, index=False)

print("done!")

Now let's visualize the data. It should appear in a grid pattern at 0.25 degrees.

fig, ax = plt.subplots(1, figsize=(12, 6))

m = Basemap(projection='cyl', resolution='c', ax=ax)

m.drawcoastlines()

ax.scatter(df["lon2"], df["lat2"], c=df["asset_index"], s=1)

ax.set_title('Mean Asset Index')

Merge Embeddings with Labels¶

Now that the data points have been moved to the centroids of the clusters, we can merge the embeddings with the labels.

mosaiks = pd.read_csv(mosaiks_embeddings_file_path)

mosaiks.head()

df = pd.read_csv(labels_centered_filename)

df.head()

# merge the two dataframes on the Lat, Lon columns from the labels and the lat, lon columns from the mosaiks

mosaiks = mosaiks.rename(columns={"lat": "lat2", "lon": "lon2"})

df_merged = df.merge(mosaiks, on=["lat2", "lon2"], how="left")

# save this as a csv

df_merged.to_csv(labels_merged_filename, index=False)

# inspect the data

df_merged.head()

Prep Data for Predicting Wealth Using Regression¶

- Drop any rows with missing label values

- Split into test / validation / train datasets

# read in the data

df = pd.read_csv(labels_merged_filename)

# keep just the columns we need

df = df[[col for col in df.columns if col.startswith("X_")] + ["asset_index"]]

# check for NaNs

print("Number of NaNs in df: ", df.isna().sum())

# drop rows with NaNs

df = df.dropna()

# check the shape again

print("Shape of df: ", df.shape)

# split the data into train and test sets

df_train, df_test = train_test_split(df, test_size=0.2, random_state=42)

# split the test set into val and test sets

df_val, df_test = train_test_split(df_test, test_size=0.5, random_state=42)

# check the shapes of the data

print("Shape of df_train: ", df_train.shape)

print("Shape of df_val: ", df_val.shape)

print("Shape of df_test: ", df_test.shape)

# separate the data into X and y

y_train = df_train["asset_index"]

y_val = df_val["asset_index"]

y_test = df_test["asset_index"]

# x is all the data in columns with names formatted like "X_1"

X_train = df_train[[col for col in df.columns if col.startswith("X_")]]

X_val = df_val[[col for col in df.columns if col.startswith("X_")]]

X_test = df_test[[col for col in df.columns if col.startswith("X_")]]

Run Linear Classifier using Ridge Regression¶

Now that we have our embeddings and our labels, we can run a linear classifier on top of the embeddings to see how well each model does at predicting poverty and wealth. We will use Ridge regression to do this, since it is a linear model that can handle high dimensional data well. It uses L2 regularization to prevent overfitting, penalizing large weights. You can learn more about it in this Medium post or in this tutorial from Analytics Vidhya.

# Define alpha values to loop over

alpha_values = [10**i for i in range(-5, 4)] # Corrected alpha values from 0.001 to 1000

# Create subplots

fig, axes = plt.subplots(3, 3, figsize=(15, 10))

axes = axes.ravel()

for i, alpha in enumerate(alpha_values):

ridge_model = Ridge(alpha=alpha)

ridge_model.fit(X_train, y_train)

y_pred = ridge_model.predict(X_val)

r2 = r2_score(y_val, y_pred)

# Create scatter plot using Seaborn

sns.scatterplot(x=y_val, y=y_pred, ax=axes[i], s=10)

axes[i].plot([-4, 4], [-4, 4], "r--") # 45-degree line

# Fit a regression line with confidence interval

sns.regplot(x=y_val, y=y_pred, ax=axes[i], scatter=False, ci=95, line_kws={"color": "blue"})

axes[i].set_title(f"Validation Performance, Alpha={alpha}, R²={r2:.2f}")

axes[i].set_xlabel("Asset Index (True)")

axes[i].set_ylabel("Asset Index (Predicted)")

plt.tight_layout()

plt.show()

We can see that the top performing value for alpha on the validation dataset is 0.01. For a final test of the model, let's run this regression model trained on the test data, tuned on the validation, and see how well it performs on the test data.

# Select the best alpha value from the validation data

alpha = 0.01

# Train Ridge regression model on training data

ridge_model = Ridge(alpha=alpha)

ridge_model.fit(X_train, y_train)

coefficients = ridge_model.coef_

# Use coefficients from training for inference on test data

y_pred = X_test @ coefficients + ridge_model.intercept_

r2 = r2_score(y_test, y_pred)

# Create scatter plot using Seaborn

plt.figure(figsize=(12, 6))

sns.scatterplot(x=y_test, y=y_pred, s=10)

plt.plot([-4, 4], [-4, 4], "r--") # 45-degree line

# Fit a regression line with confidence interval

sns.regplot(x=y_test, y=y_pred, scatter=False, ci=95, line_kws={"color": "blue"})

plt.title(f"Test Data Peformance, Alpha={alpha}, R²={r2:.2f}")

plt.xlabel("Asset Index (True)")

plt.ylabel("Asset Index (Predicted)")

plt.show()

Global Wealth Prediction¶

Now that we have a model that can predict wealth, let's use it to predict wealth across the entire globe. We will use the MOSAIKS API to get embeddings for the entire world, and then use our model to predict wealth for each location.

# Retrain Ridge regression model on training data

print("Retraining ridge model on training data...")

alpha = 0.01

ridge_model = Ridge(alpha=alpha)

ridge_model.fit(X_train, y_train)

coefficients = ridge_model.coef_

print("reading in the global dataset...")

# read in the global dataset of mosaiks embeddings

mosaiks = pd.read_csv(mosaiks_embeddings_file_path)

print("read in dataset")

lat_global = mosaiks["lat"]

lon_global = mosaiks["lon"]

# pull out the embeddings from the dataframe, columns that begin with "X_"

X_global = mosaiks.drop(columns=["lat", "lon", "continent"])

print("extracted just embeddings")

# Select the best alpha value from the validation data

alpha = 0.01

# Train Ridge regression model on training data

coefficients = ridge_model.coef_

print("fitted ridge model to training data")

# Use coefficients from training for inference on test data

print("running inference on the global data...")

y_pred_global = X_global @ coefficients + ridge_model.intercept_

print("inference complete")

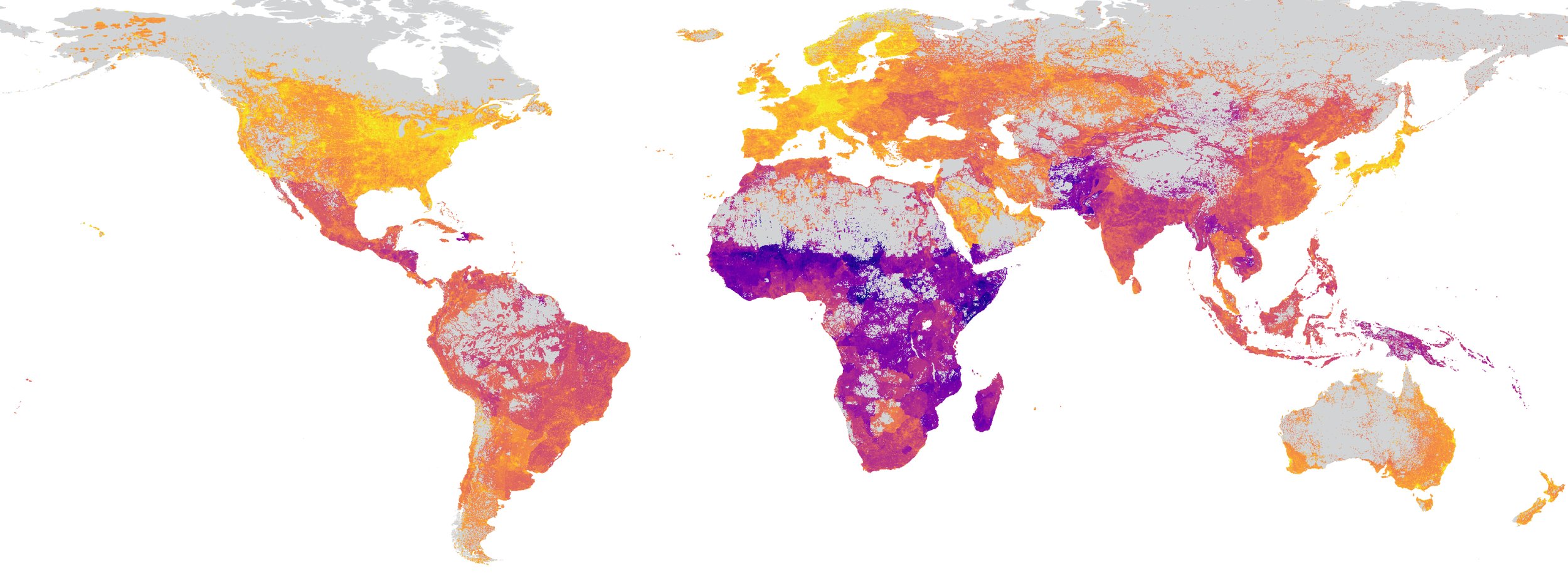

Visualize the global predictions.

# Merge predictions with lat/lon, name prediction column "pred"

df_global = pd.concat([lat_global, lon_global, pd.Series(y_pred_global)], axis=1)

df_global.columns = ["lat", "lon", "pred"]

# Convert to GeoDataFrame

gdf_global = gpd.GeoDataFrame(df_global, geometry=gpd.points_from_xy(df_global["lon"], df_global["lat"]), crs="EPSG:4326")

fig, ax = plt.subplots(1, figsize=(12, 6))

m = Basemap(projection='cyl', resolution='c', ax=ax)

m.drawcoastlines()

sc = ax.scatter(gdf_global["lon"], gdf_global["lat"], c=gdf_global["pred"], s=2, cmap='viridis')

cb = fig.colorbar(sc, ax=ax, orientation='vertical', label='Predicted Mean Asset Index')

ax.set_title('Mean Asset Index Global Prediction')

plt.show()

Assignment¶

Answer the following questions based on the regression figures above:

- What's going on with the small alpha values? Why are most data points squished up towards the top? Why is there such a strong outlier?

- What do you think is going on with the large alpha values? Why does the R-squared value decrease again?

- The red line shows a 45-degree line of a theoretical perfect fit. The blue line shows an approximation of the actual fit. Why is the blue line consistently flatter than the red line? What does this mean for our model? How could we improve that?

What do you make of our global inference map? Does it match up with your intuition of wealthy and poor areas of the world? Do you think this is a valid approach for making a global inference? What pitfalls might this approach be prone to?

Pick another variable from the SustainBench poverty dataset and repeat the analysis above. Do you see an even stronger correlation with MOSAIKS embeddings? A weaker one? Why do you think that is?

Bonus Assignment 1: Redo Analysis Higher Resolution Embeddings¶

Instead of using the low-resolution embeddings aggregated to every 0.25 degrees, we have the original embeddings at every 0.01 degrees for each of the points in the SustainBench dataset in Kaggle here. Thanks to Luke Sherman from Stanford for pulling them for us even though the MOSAIKS API was down. How does the spatial resolution of the DHS clusters and the dense MOSAIKS embeddings compare? Repeat the analysis using the dense embeddings. How does the accuracy compare with the coarse resolution embeddings?

Bonus Assignment 2: Geospatial Errors¶

Associate the errors in the model above with the original geospatial datapoints in the SustainBench dataset and plot them on a map. Do you see any patterns? Are there any areas of the map that perform worse than others? If so, what do you think you could do to reduce the geographic bias of the model?

Convert Notebook to HTML¶

# supress warnings

import warnings

warnings.filterwarnings("ignore")

# export to HTML for webpage

import os

os.system('jupyter nbconvert --to html pt2-mosaiks.ipynb --HTMLExporter.theme=dark')

[NbConvertApp] Converting notebook pt2-mosaiks.ipynb to html [NbConvertApp] Writing 347418 bytes to pt2-mosaiks.html

0