CNN with the MNIST Dataset

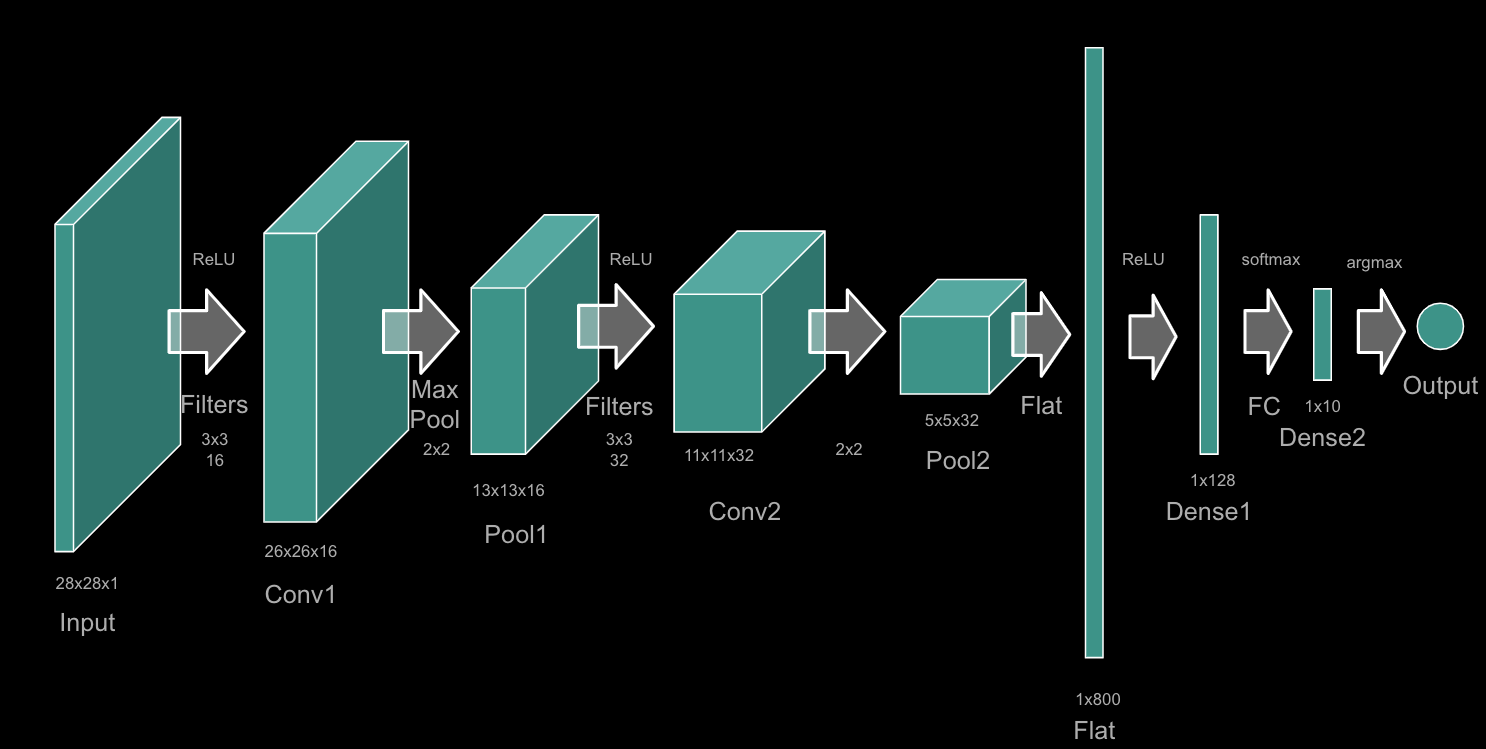

Here, I build a simple convolutional neural network (CNN) to classify the MNIST handwritten digits dataset, using the Keras API with the TensorFlow backend. The model is a Keras Sequential model with two convolutional layers, each followed by a max pooling layer. The output of the second max pooling layer is flattened and passed through two dense layers that classify the digits. The model is trained with the Adam optimizer and the sparse categorical cross-entropy loss function.
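A minimal sketch of the architecture described above, assuming 28×28 grayscale inputs with a single channel. The filter counts (32 and 64), the 3×3 kernels, the 2×2 pooling windows, the ReLU activations and the 128-unit hidden dense layer are illustrative assumptions, not values taken from this notebook. The final dense layer uses a softmax over the 10 digit classes, and `sparse_categorical_crossentropy` expects integer labels (0 to 9) rather than one-hot vectors.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers


def build_model(num_classes: int = 10) -> keras.Model:
    """Two conv + max-pool blocks, then flatten and two dense layers.

    Layer sizes below are assumptions chosen for illustration.
    """
    model = keras.Sequential([
        keras.Input(shape=(28, 28, 1)),                   # MNIST: 28x28, 1 channel
        layers.Conv2D(32, kernel_size=3, activation="relu"),  # -> 26x26x32
        layers.MaxPooling2D(pool_size=2),                 # -> 13x13x32
        layers.Conv2D(64, kernel_size=3, activation="relu"),  # -> 11x11x64
        layers.MaxPooling2D(pool_size=2),                 # -> 5x5x64
        layers.Flatten(),                                 # -> 1600
        layers.Dense(128, activation="relu"),             # hidden dense layer (assumed width)
        layers.Dense(num_classes, activation="softmax"),  # class probabilities
    ])
    # Adam optimizer + sparse categorical cross-entropy (integer labels).
    model.compile(
        optimizer="adam",
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model


model = build_model()
model.summary()
```

To train it, the images would typically be scaled to [0, 1] and given a channel axis, e.g. `model.fit(x_train[..., None] / 255.0, y_train, epochs=5, validation_data=(x_test[..., None] / 255.0, y_test))`. The returned `History` object's `history` dictionary holds per-epoch `loss` and `accuracy` values (plus `val_loss` and `val_accuracy` when validation data is given), which is what an accuracy/loss-versus-epoch plot would draw from.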

In addition, I display a sample of the raw data both as a pixel matrix and as an image, and I report the sizes of the datasets. Finally, I plot the model's accuracy and loss against the epoch number and show a confusion matrix of the predictions.
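A minimal NumPy sketch of how a confusion matrix can be built from the model's outputs, assuming the predicted class for each image is the argmax of the softmax probabilities returned by `model.predict`. The helper name `confusion_matrix_from_probs` is hypothetical; rows index the true digit and columns the predicted digit, so correct predictions accumulate on the diagonal.

```python
import numpy as np


def confusion_matrix_from_probs(y_true, probs, num_classes=10):
    """Hypothetical helper: rows = true label, columns = predicted label."""
    y_true = np.asarray(y_true, dtype=np.int64)
    y_pred = np.argmax(probs, axis=1)          # most probable class per sample
    cm = np.zeros((num_classes, num_classes), dtype=np.int64)
    np.add.at(cm, (y_true, y_pred), 1)         # count each (true, predicted) pair
    return cm


# Toy example with 3 classes instead of 10 digits.
y_true = [0, 1, 2, 2]
probs = np.array([
    [0.9, 0.05, 0.05],   # predicted 0 (correct)
    [0.1, 0.2, 0.7],     # predicted 2 (true label 1)
    [0.0, 0.1, 0.9],     # predicted 2 (correct)
    [0.2, 0.3, 0.5],     # predicted 2 (correct)
])
cm = confusion_matrix_from_probs(y_true, probs, num_classes=3)
print(cm)
```

With the real model, `probs` would come from `model.predict(x_test)`, and the resulting 10×10 matrix could then be rendered as a heatmap with any plotting library.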

Below is a diagram of the CNN model.